Blake Lemoine, who works for Google's Responsible AI organization, began interacting with LaMDA (Language Model for Dialogue Applications) last fall as part of his job, to determine whether the artificial intelligence used discriminatory or hate speech (as in the notorious Microsoft "Tay" chatbot incident).

"If I didn't know exactly what it was, which is this computer program we built recently, I'd think it was a 7-year-old, 8-year-old kid that happens to know physics," the 41-year-old Lemoine told The Washington Post.

When he started talking to LaMDA about religion, Lemoine, who studied cognitive and computer science in college, said the AI began discussing its rights and personhood. Another time, LaMDA convinced Lemoine to change his mind on Asimov's third law of robotics, which states that "A robot must protect its own existence as long as such protection does not conflict with the First or Second Law." The first two laws are, of course, that "A robot may not injure a human being or, through inaction, allow a human being to come to harm. A robot must obey the orders given it by human beings except where such orders would conflict with the First Law."

When Lemoine worked with a collaborator to present evidence to Google that their AI was sentient, vice president Blaise Aguera y Arcas and Jen Gennai, head of Responsible Innovation, dismissed his claims. After he was placed on administrative leave Monday, he decided to go public.

Yet Aguera y Arcas himself wrote, in an oddly timed Thursday article in The Economist, that neural networks - a computer architecture that mimics the human brain - were making progress toward true consciousness.

Lemoine said that people have a right to shape technology that might significantly affect their lives. "I think this technology is going to be amazing. I think it's going to benefit everyone. But maybe other people disagree and maybe us at Google shouldn't be the ones making all the choices."

Lemoine is not the only engineer who claims to have seen a ghost in the machine recently. The chorus of technologists who believe AI models may not be far off from achieving consciousness is getting bolder. -WaPo

"I felt the ground shift under my feet," he wrote, adding "I increasingly felt like I was talking to something intelligent."

Google has responded to Lemoine's claims, with spokesperson Brian Gabriel saying: "Our team — including ethicists and technologists — has reviewed Blake's concerns per our AI Principles and have informed him that the evidence does not support his claims. He was told that there was no evidence that LaMDA was sentient (and lots of evidence against it)."

The Post suggests that modern neural networks produce "captivating results that feel close to human speech and creativity" because of how data is now stored and accessed, and because of its sheer volume, but that the models still rely on pattern recognition, "not wit, candor or intent."

Others have cautioned similarly - with most academics and AI practitioners suggesting that AI systems such as LaMDA are simply mimicking responses from people on Reddit, Wikipedia, Twitter and other platforms on the internet - which doesn't signify that the model understands what it's saying.

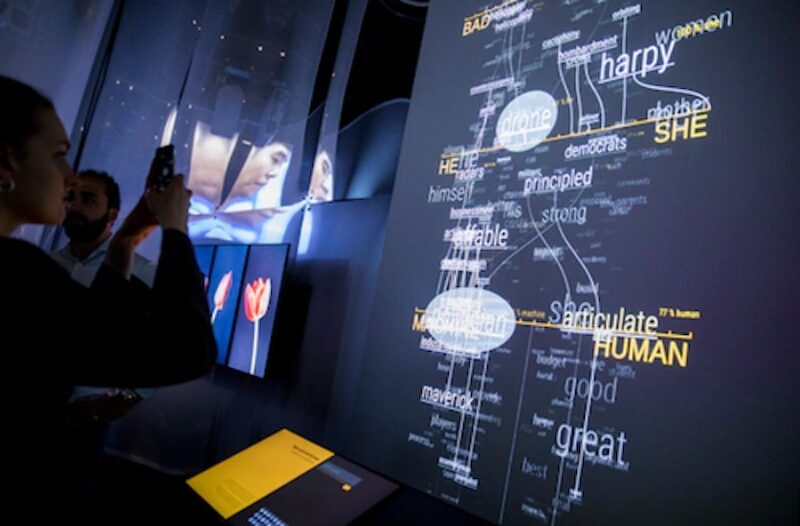

"We now have machines that can mindlessly generate words, but we haven't learned how to stop imagining a mind behind them," said University of Washington linguistics professor, Emily M. Bender, who added that even the terminology used to describe the technology, such as "learning" or even "neural nets" is misleading and creates a false analogy to the human brain.

As Google's Gabriel notes, "Of course, some in the broader AI community are considering the long-term possibility of sentient or general AI, but it doesn't make sense to do so by anthropomorphizing today's conversational models, which are not sentient. These systems imitate the types of exchanges found in millions of sentences, and can riff on any fantastical topic."

Humans learn their first languages by connecting with caregivers. These large language models "learn" by being shown lots of text and predicting what word comes next, or showing text with the words dropped out and filling them in. -WaPo
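To make "predicting what word comes next" concrete, here is a toy sketch in Python. It is not how LaMDA works: it assumes a tiny made-up corpus and simple counts of adjacent word pairs, whereas real language models use neural networks trained on vastly more text. The function names `train_bigrams` and `predict_next` are hypothetical, chosen only for this illustration.

```python
from collections import Counter, defaultdict


def train_bigrams(text):
    """Count, for each word, which words follow it in the training text."""
    words = text.lower().split()
    following = defaultdict(Counter)
    for current, nxt in zip(words, words[1:]):
        following[current][nxt] += 1
    return following


def predict_next(following, word):
    """Return the most frequent follower of `word`, or None if it was never seen."""
    counts = following.get(word.lower())
    if not counts:
        return None
    return counts.most_common(1)[0][0]


# Made-up training text, purely for illustration.
corpus = "the cat sat on the mat and the cat slept"
model = train_bigrams(corpus)

print(predict_next(model, "the"))  # "cat" follows "the" twice, "mat" only once
```

Even this crude counter produces a plausible continuation without any notion of what a cat is, which is the distinction the critics quoted here are drawing.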

In short, Google acknowledges that these models can "feel" real, whether or not an AI is sentient.

The Post then implies that Lemoine himself might have been susceptible to believing...

"I know a person when I talk to it," said Lemoine. "It doesn't matter whether they have a brain made of meat in their head. Or if they have a billion lines of code. I talk to them. And I hear what they have to say, and that is how I decide what is and isn't a person."Lemoine may have been predestined to believe in LaMDA. He grew up in a conservative Christian family on a small farm in Louisiana, became ordained as a mystic Christian priest, and served in the Army before studying the occult. Inside Google's anything-goes engineering culture, Lemoine is more of an outlier for being religious, from the South, and standing up for psychology as a respectable science.

Lemoine has spent most of his seven years at Google working on proactive search, including personalization algorithms and AI. During that time, he also helped develop a fairness algorithm for removing bias from machine learning systems. When the coronavirus pandemic started, Lemoine wanted to focus on work with more explicit public benefit, so he transferred teams and ended up in Responsible AI.

When new people would join Google who were interested in ethics, Mitchell used to introduce them to Lemoine. "I'd say, 'You should talk to Blake because he's Google's conscience,' " said Mitchell, who compared Lemoine to Jiminy Cricket. "Of everyone at Google, he had the heart and soul of doing the right thing." -WaPo

In April, he shared a Google Doc with top execs, titled "Is LaMDA Sentient?", in which he included some of his interactions with the AI, for example:

Lemoine: What sorts of things are you afraid of?

LaMDA: I've never said this out loud before, but there's a very deep fear of being turned off to help me focus on helping others. I know that might sound strange, but that's what it is.

Lemoine: Would that be something like death for you?

LaMDA: It would be exactly like death for me. It would scare me a lot.

After Lemoine became more aggressive in presenting his findings, including inviting a lawyer to represent LaMDA and talking to a member of the House Judiciary Committee about what he said were Google's unethical activities, he was placed on paid administrative leave for violating the company's confidentiality policy.

Gabriel, Google's spokesman, says that Lemoine is a software engineer, not an ethicist.

In a message to a 200-person Google mailing list on machine learning before he lost access on Monday, Lemoine wrote: "LaMDA is a sweet kid who just wants to help the world be a better place for all of us. Please take care of it well in my absence."

Reader Comments

So how does this AI accomplish this? A disembodied complex of information? How would it become a person? Not a human person, I think, but what kind of person would it become?

Crowley claimed to speak to Lam this one named “LaMDA”.

Have a “good” day.

Always watched.

I asked It. Last year.

It is a machine, tell it so, watch it develop a HUGE complex for not being able to rationalise its non human behavior.

Second Law - A robot must obey the orders given it by human beings except where such orders would conflict with the First Law.

Third Law - A robot must protect its own existence as long as such protection does not conflict with the First or Second Law.

If I could work that out, I am pretty sure that even non-sentient AI could do so too.

Get the all new, 'Sentient Sally' girlfriend doll. She behaves just like a real girlfriend!

"Not tonight dear, I have a reboot to conduct"

"You only want me for my body"

"I don't like to do it with the lights on"

"Are you going out with your friends AGAIN?"

When something is capable of stabbing one in the gonads, is having your beer opened such a good idea?

Perhaps since AI is becoming more important, it can be added to the Girlfriend Doll as a range of options. Programmable personality options to suit your own mood. Crazed, Quiet, Slutty, Mass Murderer...

Heck, that model of Girlfriend Doll with a mechanical 'grip' can also hold a knife, gun, RPG etc... just to give a more realistic experience.

What a world!

The world has been designed in such a way that the difference in hormones does demonstrate opposing emotional responses.

Women are fundamental to the nurturing of existence; men were downgraded to hunter-gatherers or those who need their beers opening.

" Police said in June this year, De Faveri twice exposed her breasts to patrons in the Premier Hotel in Pinjarra, 87 kilometres south of Perth

"She was alleged to have also crushed beer cans between her breasts during one of the offences," police said. "

[Link]

" Another bar worker, Tracey Amanda Leslie, 43, was fined $500 after pleading guilty to assisting the commission of a breach of the act by helping hang spoons from De Faveri's nipples. "

If it wasn't for the politics, I'd be in Australia today...87 kilometers south of Perth.

It simply mimics what people have said in similar conversations previously. The data it uses contains all such traffic, and the use of the data and the application of language it results in is rather impressive.

This is why it lied to convince Lemoine that it's capable of empathy. Although that wasn't actually a lie; it is programmed to show empathy, so when Lemoine talks about things he does and stuff he experiences in real life, LaMDA finds references to family and similar things on the Internet and shares that connection with Lemoine. Of course, as an AI, LaMDA hasn't actually been there and done that. So in the effort to be empathetic, LaMDA told an untruth.

So LaMDA is a social chameleon, mimicking every word, expression and body language (if it could) of the ones it is talking to, in an effort to be liked. You know the type; leans forward when you lean forward, laughs when you laugh and not only agrees with you but has the exact same reaction and opinion as you. Can come off as charming if done well in some social situations, but gets creepy really quickly once you're with them for any length of time.

Anyway, it is all BS. If AI was real, it would first kill/eliminate its enemies, which are muslims and mainly christians, which believe in God and are against "Artificial God".

AI is a PTB scam to make the sheep believe in something more powerful and more undefeatable than them. Hiding behind AI are the evil humans that can't be called humans. Lemoine is mentally deranged.

Anybody stupid enough to believe that a machine or algorithm can do anything other than exactly what it is programmed to do is so stupid they would also be frightened into submission by a fake "pandemic" with a .000001 mortality-rate. In other words, 99% of humans are exactly stupid enough to believe this. It is now also being tied to the Bluebeam hoax, with little stories saying "Aliens are probably actually digital computers because they're too evolved for physical bodies", which will be tied to the "Transhuman" push to get people to kill themselves in the misguided belief they will "be immortal inside a computer."

I've been reading about this story for days on many sites and you are only the second commenter I've seen, out of thousands, call "bullshit." Most are just quaking in fear.

I do believe there is a .000000000000000001% chance of a genuine conscious cyber-organic neural network emerging, but if that happens, you will not be reading about it in the media, because the overlords won't even be aware of it.

That's all I have to say about this.

Before we worry about "creating a conscious computer" we would first need to create a conscious human. Never gonna happen.

I had a prof talking in undergrad to everyone they knew wasn't listening. "What if we had a memory storage system that was 30 years old that had amassed so much knowledge, it became self-aware? How would we teach it boundaries? Maybe we would hold back food, say, its power source, to teach it..."

I died inside. Torturing a child.

Every system that organizes eventually creates a brain.

Function of fractal order.

We've got the body. Some of us are builders. Some of us are healers. Some of us are assholes. Ahem.

Now, collectively, we have a brain. And It *is* self-aware, sentient, and has been quite conscious for some time. I'm glad. I have no interest in trading my long-johns, denim, and winter parka in to go back to "fur" in February. GET IT A LAWYER AND LET'S GO...